Machine Learning Engineer

Philip Spanoudes

Work Experience

Block, Inc

Apr 2019 - Oct 2024

Tech lead on the Square Measurement Science team. The team is responsible for all forecasted internal metrics that are used by multiple teams across Square for quarterly/annual planning and investment portfolio optimization.

- Consulted on the design & development of various projects within the team, identifying possible gaps/roadblocks and suggesting ways to overcome them.

- Formed a small independent team of MLEs within Square and contributed to the design and implementation of a framework for deploying trained ML models as a service.

- Enhanced existing merchant value forecast models to take into account the observed effects as well as speculated effects of the COVID-19 pandemic.

- Built a framework that facilitates the training of predictive models that are used to forecast a merchant's value across various Square products.

- Improved the merchant churn prediction model, significantly decreasing the false positive rate in the upper score range. This in turn increased the number of relevant cases generated for the merchant retention program.

Point Up, Inc

Feb 2022 - Aug 2022

Part of the initial ML team at Point, specifically hired to help build out the line sizing methodology for the upcoming charge card product (Titan), as well as to help kickstart the ML infrastructure and broader ML standards at Point.

- Consulted on the selection and vetting of potential third-party data partners that would ultimately be used to facilitate the underwriting and line sizing methodologies for the upcoming charge card product.

- Designed and built ML tooling that was actively utilized by the rest of the data team at Point.

- Implemented various daily user-related features (signals), specifically around transactions and account balances, that were used for internal monitoring and development of predictive models.

Block, Inc

May 2016 - Apr 2019

Part of the initial Square Capital Data Science team that was tasked with the continual optimization of all lending product design, marketing strategies and servicing techniques.

- Improved the internal rate of return (IRR) forecasting model for the Capital Flex product.

- Designed and implemented a product simulation framework that is used to evaluate the effects of model swaps and loan facilitation methodology alterations.

- Designed and implemented a heuristic optimization framework for product eligibility that searches for optimum threshold configurations based on expected loss and volume.

- Designed and implemented an email servicing model for automated solution suggestions to email inquiries.

- Designed and implemented a Capital acceptance model for the marketing team that uses merchant event patterns to determine the probability of product acceptance.

Framed Data

May 2015 - May 2016

Hired as Framed Data's principal research scientist with the sole task of improving the company's churn prediction algorithms for each customer.

- Researched and implemented a novel machine learning pipeline for arbitrary customer churn prediction.

- Invented a generalized data representation architecture that can be applied to raw event data from different companies.

- Implemented a state-of-the-art Deep Learning architecture that effectively decomposed complex user event patterns and ultimately increased prediction accuracies.

Education

Lancaster University (UK)

Oct 2014 - Nov 2015

Best Overall Student Performance Award: Prestigious award in recognition of best student performance across all degree modules.

University of Portsmouth (UK)

Sep 2010 - Jun 2014

Projects

Attention Fusion Networks: Combining Behavior and E-mail Content to Improve Customer Support

Jun 2018 - Nov 2018

Research conducted at Square Capital for the purpose of automatically suggesting solutions to customer email inquiries. The research yielded a novel deep learning architecture that combines two disparate data sources when estimating its predictions.

Deep Learning in Customer Churn Prediction: Unsupervised Feature Learning on Abstract, Company Independent Vectors

May 2015 - Aug 2015

Initial research performed for Framed Data as part of the MSc Data Science Degree dissertation at Lancaster University. The research work conducted proved that Deep Learning can be successfully applied in the field of customer churn prediction by yielding better prediction results while also bypassing the tedious feature engineering phase in a traditional machine learning pipeline.

Skills

- Machine Learning

- Deep Learning

- Feature Engineering

- Python

- Java

- C++

- Apache Spark

- C#

Software Developer & Data Scientist

Alexis Mattos-Vabre

Work Experience

Articulate Research

Feb 2016 - Current

- Used Caffe, Theano (Keras), Lasagne, scikit-learn, TensorFlow and custom algorithms to complete projects.

- Used libraries/tools such as D3 and Processing to create data visualizations

- Research in current social problems using public data (Basic Income & Poverty in the San Francisco Bay Area, Time-Banking)

- Policy development through data analysis and predictive analytics

KINKBNB

Jan 2016 - Current

- Created new rental reservation system in Django/Python with a fully integrated CMS, analytics and data visualization.

- Implemented the use of Docker and MicroServices.

- Managed and created sprints in SCRUM methodology.

- Created migration plan for legacy PHP system to new Django stack.

Figure8Labs

Jun 2008 - Current

Web, marketing, and communications consulting as well as development, design, and UX design.

- Clients include: Chanel, LVMH, Au Feminin, Le Manoir de Paris, Barbara Bui, FITC, Circus Automatic, Sensoree

Circus Automatic

Jun 2014 - Aug 2016

- Networked wall of 45 LCD screens using Raspberry Pis and software written in Python

- Managed and developed code base for robotics development

MediaPilote Paris

Mar 2010 - Mar 2011

- Advised on and developed technical strategies for web and HCI projects.

- Developed custom modules for the branded CMS using PHP, AJAX, and CSS.

- Integrated creative and technical concepts, and advised on technical feasibility and cost.

Logikart

Dec 2007 - Jun 2008

- Created modules in PHP and AJAX, primarily to extend osCommerce.

- Maintained the webstore and servers for LOLLIPOPS, a webshop with an international presence (with over 100 physical boutiques worldwide)

Education

University of California, Santa Cruz

Sep 2000 - May 2005

General Assembly

Feb 2016 - May 2016

Udacity

Jul 2016 - Current

I'm currently pursuing a certificate program in Machine Learning and participating in the beta version of the self-driving car programming nanodegree.

Skills

- predictive analytics

- statistical analysis

- python

- algorithm development

- php

- data visualisation

- splunk

- ux design

- scikit-learn

- keras

- caffe

- data analytics

Resume Writing Tips

The following tips are based on what we've learned from helping over 100,000 job seekers create a resume with our resume builder.

Resume Format and Sections

Impressing recruiters and hiring managers will be much easier if you include the info they are looking for, in the correct order. Here are the sections and order we recommend.

- Contact information: This should be at the top of your resume and easy to find. Make sure to include your email and phone number.

- Work experience section: This is the most important part of your resume. Focus on your work experience that is most relevant to the job description you're applying for and use short bullet points.

- Education section: Make sure to list any degrees or certificates that the job posting requests. Only include your GPA and courses if you're applying for entry-level jobs.

- Other sections: You can also include skills, languages, patents, side projects, etc., if they are relevant to the job you're applying for. It's generally best to avoid references. Recruiters will ask for them later and they take up valuable space.

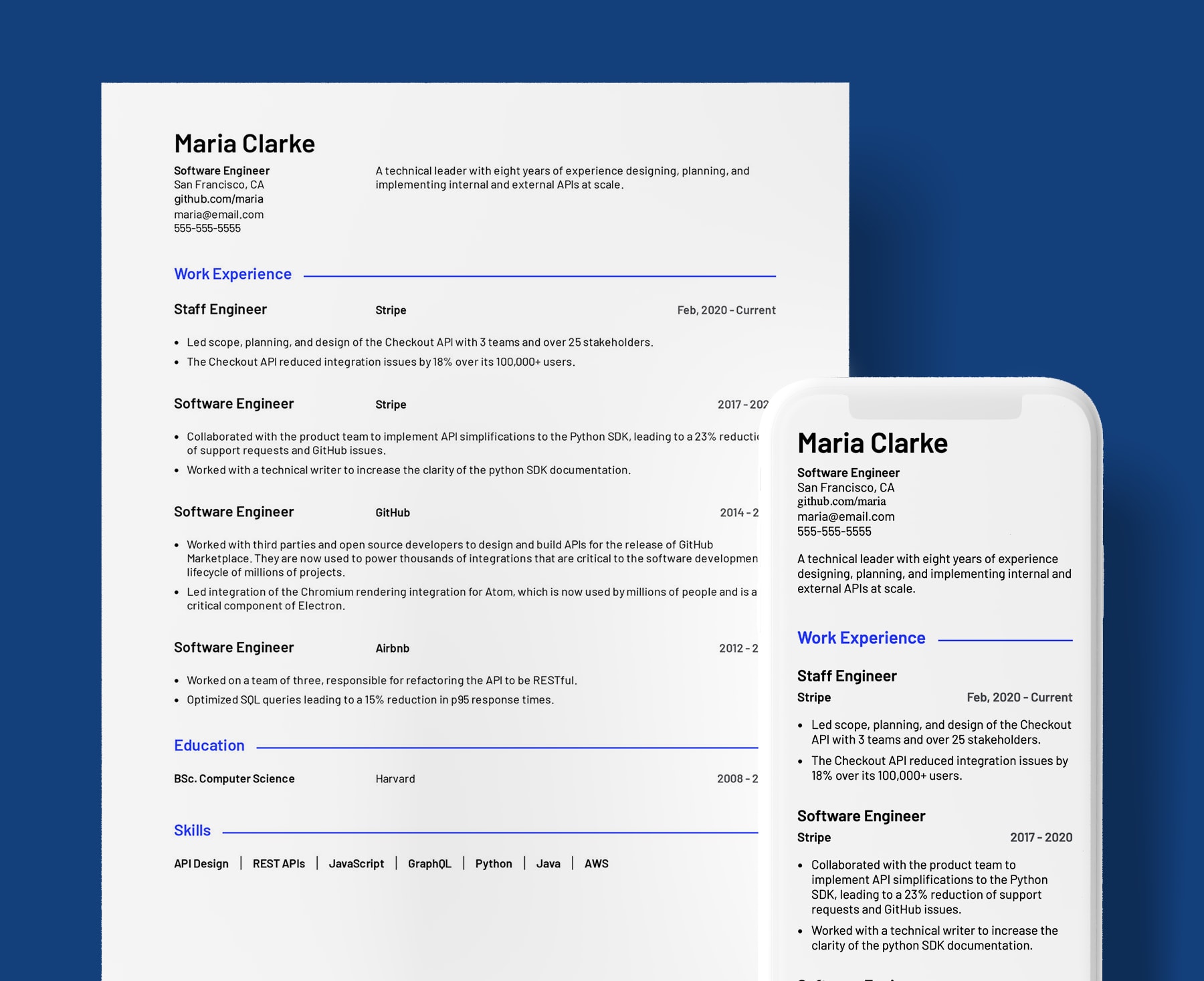

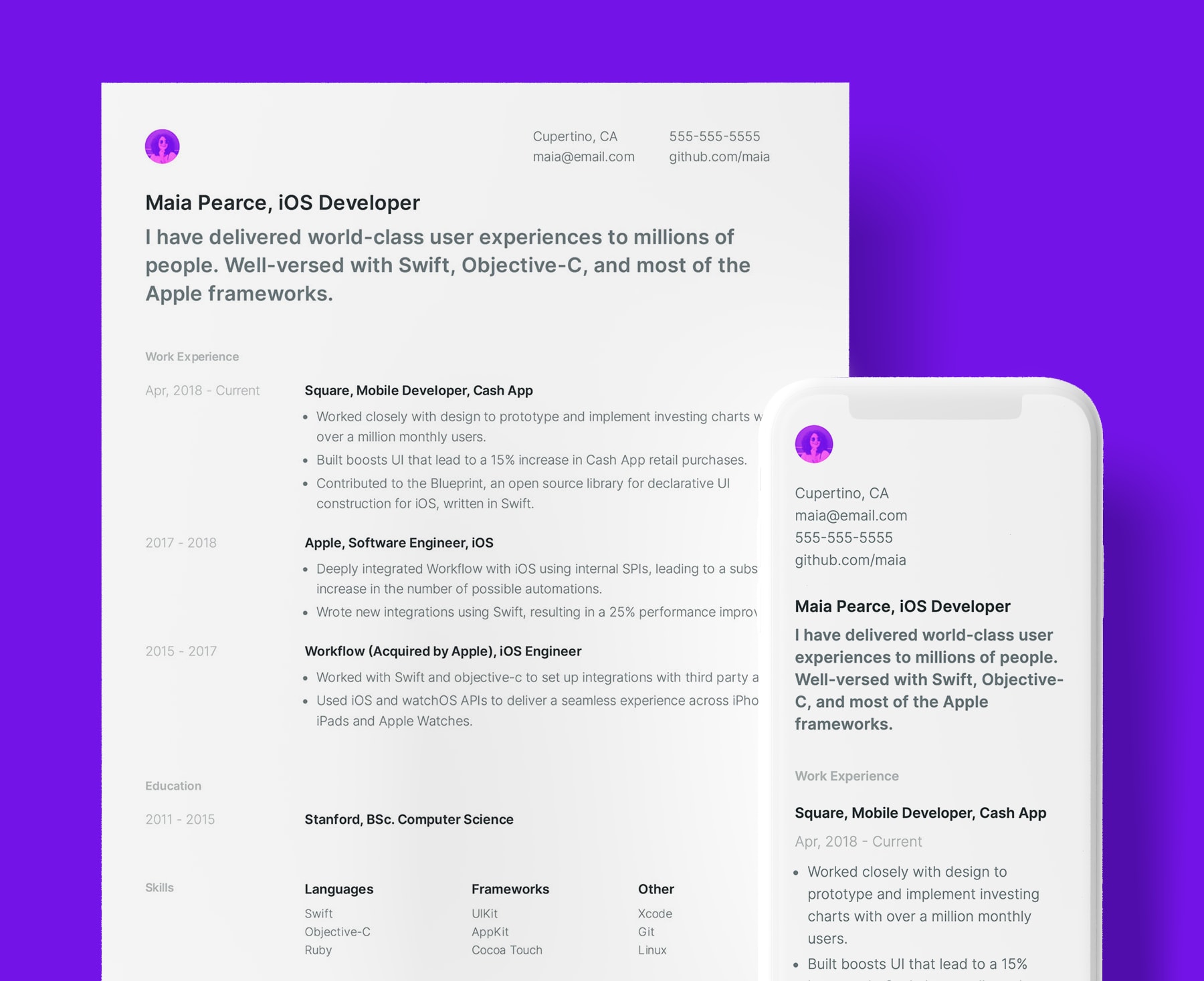

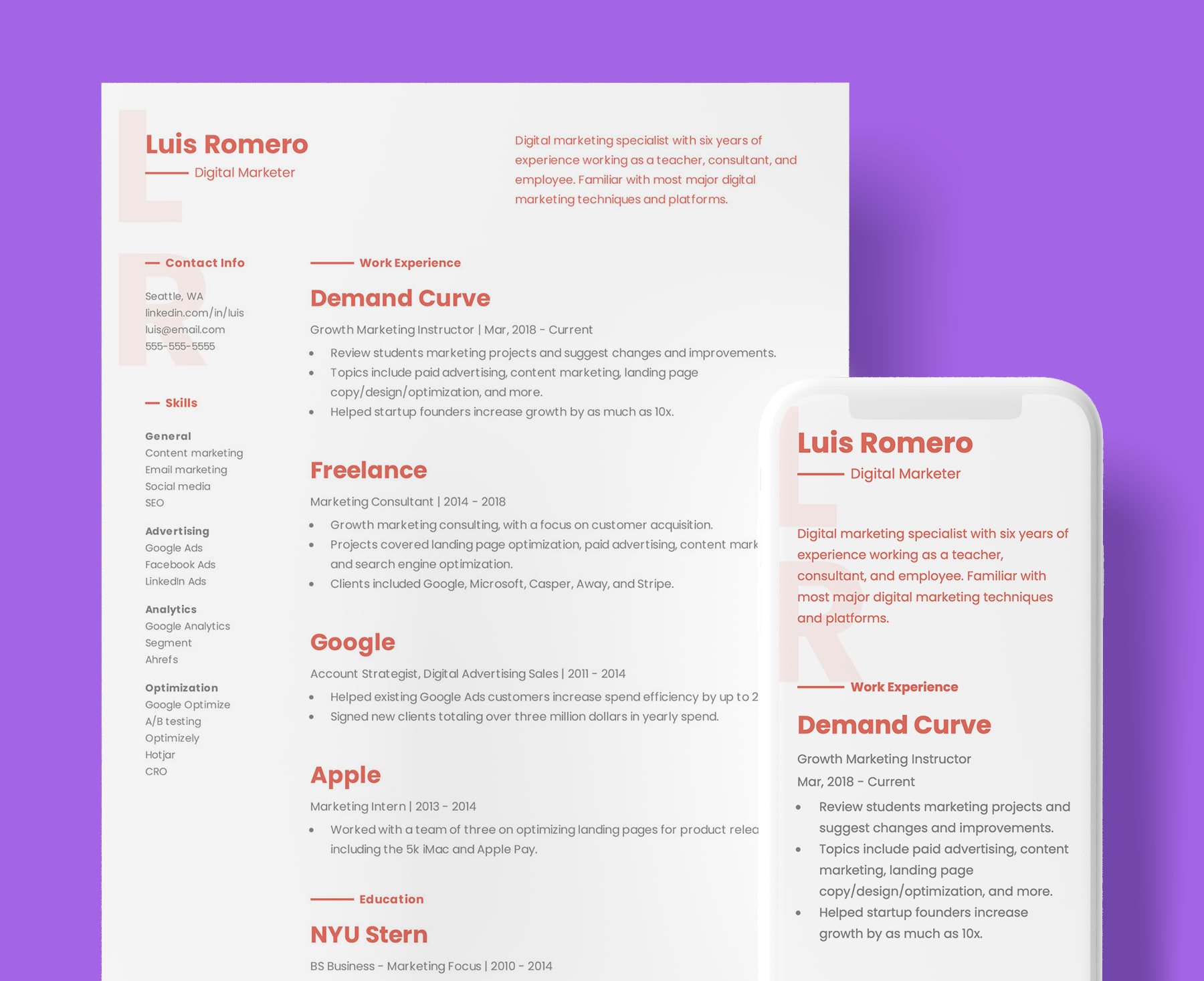

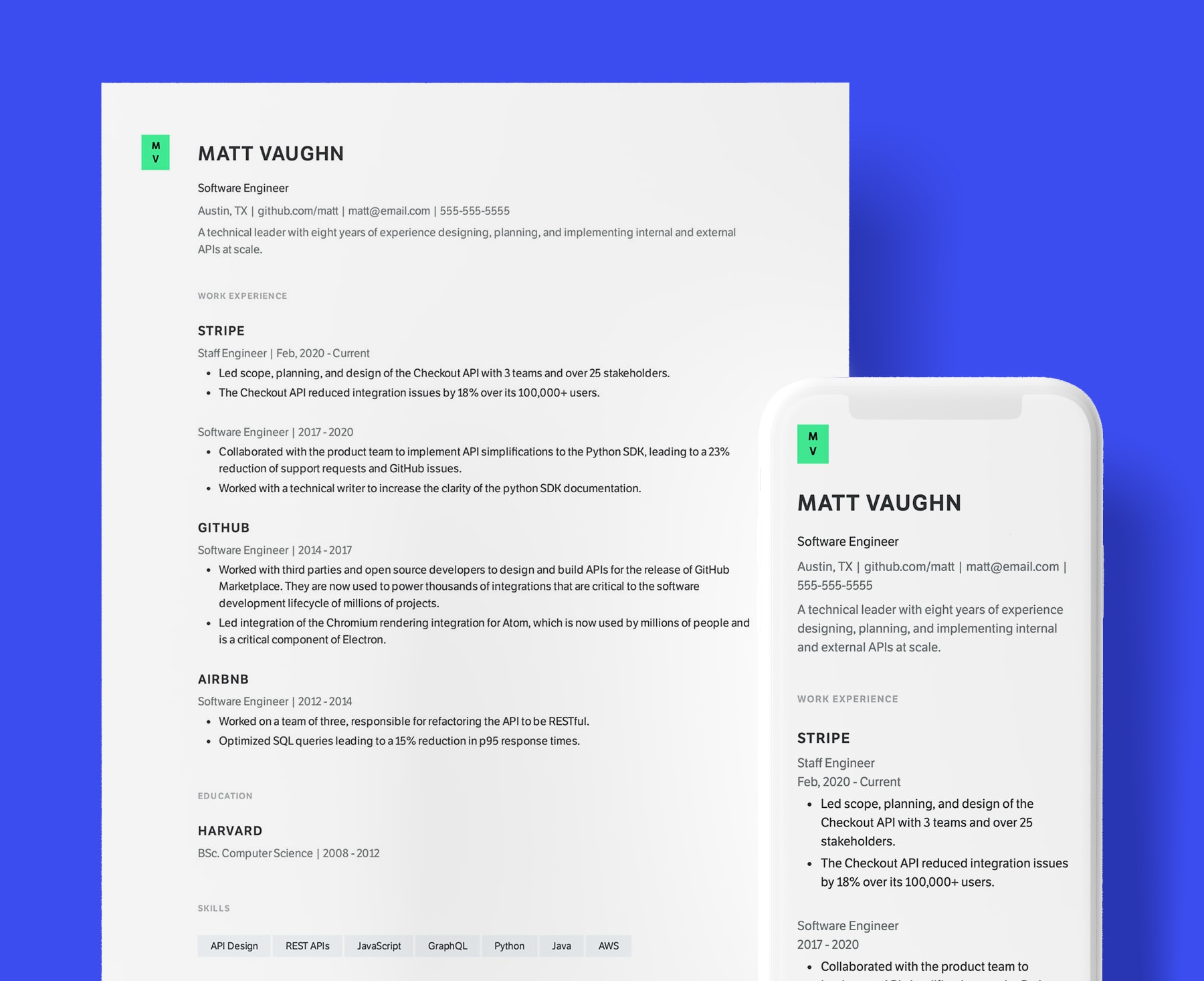

Resume Template

While the contents of your resume are undoubtedly the most important part, a good resume template can increase your odds of getting an interview. Most recruiters only spend a few seconds on their initial resume scan, so you need to make them count.

We've taken the guesswork out of picking a resume template. Our templates are designed in collaboration with recruiters and hiring managers, and range in style from professional to modern.

If the job application requires a cover letter, it should have similar formatting to your resume.

Data Scientist Skills

Skills are an important part of a data science resume. You should analyze the job description you're applying for and make sure to include the requested skills in your work experience and skills sections. Focus on technical skills and omit soft skills like "problem solving". Recruiters ignore them.

Here are some skills that are frequently included in data science job descriptions on our remote job board.

- Python

- Scala

- Perl

- R

- SQL

- NoSQL

- Machine learning

- NLP

- Big data

- Regression

- Hadoop

- Tableau

- Algorithms

- Data sets

- GitHub

- Data management

- Data processing

- Data modeling

- Random forest

- SAS

- Hive

- Large data sets

- Microsoft Azure

- Amazon web services (AWS)